The truth about AI is both scary and funny.

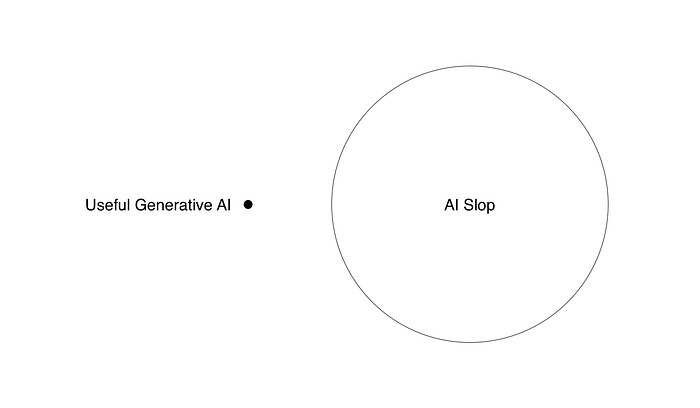

Can AI be useful and useless at the same time? How does it work?

We are living in an AI powered economy.

But when you hear "AI powered", you may get the false impression that AI is moving the economy forward through innovation.

No. That's totally not the case. It may have been like that briefly, when people first started talking with a chatbot that felt like magic. But that was years ago.

When it comes to mass adoption, a farting Italian Greyhound or a burnt toast emoji are likely on top of the list. We'll get to that.

Let's focus on why AI companies pay each other to inflate their valuations, which notable companies are NOT in that circle, which financial metrics look alarming, and what AI is actually good for outside of inflating the stock prices of AI firms.

I'll also share some hilarious, yet scary examples of AI misuse around my professional work.

And then we'll talk about why shining a light over some glass panels got creatives so excited.

MIT report 2025: 95% of generative AI pilots at companies are failing to deliver any positive results

The Lazy Human Problem

Most businesses now claim they're "AI powered" at least to some degree.

If you look at the stats, the exact opposite may be true. More and more companies report declining output quality and slipping deadlines after AI adoption. It all runs on an empty promise that someday AI may actually get things going for real.

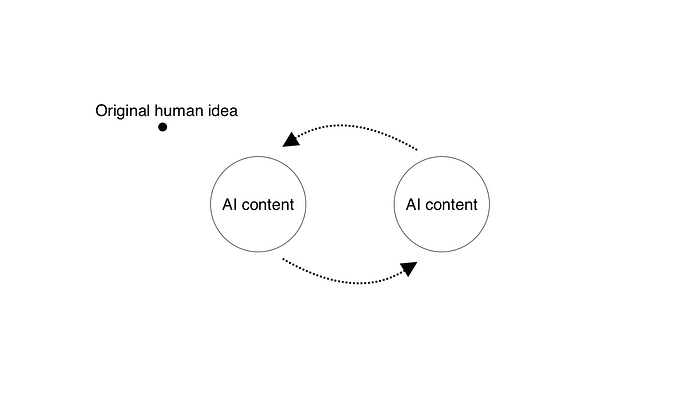

Scientific papers were written with AI, and then peer reviewed using AI. And of course AI didn't pick up on mistakes and omissions made by AI. That practice is now starting to be banned in academia.

The fallout includes AI-generated inaccuracies in review tools, flawed benchmarks that exaggerate AI capabilities, a rise in poor-quality papers due to AI misuse, and the propagation of errors like a nonsensical scientific term that slipped through peer review.

It all stems from the fact that we really, really want AI to be good enough. We don't want to think; we want the best results delivered to us. That inherent laziness makes us turn a blind eye to the shortcomings of the technology.

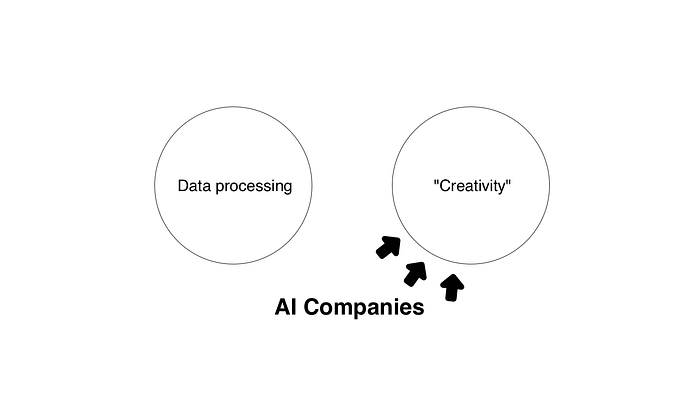

Which way modern AI company? Well… that seems to be decided.

We're using it wrong

For some reason, AI companies decided "generative" is the most enticing approach to win over consumers. It means models and systems designed to generate new content or data that resembles human-created outputs.

Instead of taking over the cumbersome work, it takes away the creative side. The part of every job that's the most fun and engaging. That's a recipe for disaster right there!

AI was already great at data parsing and pattern recognition way before ChatGPT came along. And it is still being used that way.

New scientific breakthroughs around health and longevity are now appearing almost weekly. AI-driven protein folding via AlphaFold has accelerated new drug discovery.

But research is not a wide enough spectrum. Sure, it's great we can move science forward, but that won't sustain the power hungry AI train.

It desperately needs regular Janes and Joes prompting away.

And in an increasingly AI-driven world, many designers I know are moving to designing on paper to boost creativity.

The AI reality 2025

Right now the AI world is a weird financial mechanism running on a handful of use cases that aren't really for the masses. That leads to big consulting firms delivering AI-generated (and full of errors) reports to their customers.

AI adoption was pushed at companies big time, yet resulted in 95% of them showing either no improvement or a decline in outputs.

Events like huge consultancies misusing AI out of laziness or cost-cutting only undermine both their value AND the value of any kind of output.

Whatever you send to your client now, they'll try to see whether you actually made it yourself. That causes distrust, which means customers don't want you to "generate stuff" for them.

That pullback is already happening in many industries.

It looks "too AI generated", let's change it!

AI didn't really replace high-skilled workers. It augmented the ones that were suited for it. The ones that took the extra effort. The ones that don't really need AI in the first place.

But as for everyone else?

The lower-skilled employees are usually not ready to be "AI powered". They lack the fundamentals of AI oversight, which leads to smaller and larger disasters.

It seems like the weakest links of a business get more productive, but making sure it's useful work requires even more energy.

It's a loop of incompetence posing as ultra-productivity. This may fool managers for a quarter or two, but they will finally catch up to all the errors and quality drops.

And it seems that is exactly what's happening now in businesses that "adopted AI".

Design doesn't have to follow the same patterns

AI is useful but…

Yes, I use AI when coding my mobile apps. And when done right, AI can be amazing at this. But the promise of "vibe coding" is also misleading. The only reason I made and released a mobile app is that I understand code. Not well enough to fully write it myself, but enough to rework parts of it by hand.

All the UI in my apps was done without any AI influence, which is also why my apps don't have that "generic AI look". I'm now also in the process of swapping the remaining AI-generated images for real photos.

Real photos (right) always work better than AI placeholders (left)

The promise of "just say what you want and watch the magic happen" is far from reality.

Coding help isn't a use case that'll dramatically change the world. I am making small, self-contained, no-backend products on purpose. I know that going beyond that poses huge risks and is simply not worth it.

That means these AI-backed products need to be envisioned with that in mind from the start.

I make my apps with love and attention to detail. AI is used only where necessary.

Generative visuals

AI can also help with image editing, for example by generating assets that a professional later merges into the final piece. But AI cannot reliably "generate" high quality visual outputs. And flashing your lifelike photos or Hollywood style videos made with AI won't change that.

The problem with AI visuals is not even in the uncanny valley feel, as that is less and less of an issue now. It's in the fact that those AI visuals are made by people with low visual understanding. And that shows clear as day.

How to be the best at using AI?

The best AI work is done by people who would be able to still do the same work if AI never existed. Yet, they all decide unanimously to only use AI for some of the building blocks and opt for a lot of manual work on top of that.

The masses? The low-skilled people? They embrace the ability to "generate stuff". That's why we see so much slop nowadays.

It's everywhere. In social media posts, YouTube videos, blog posts. Everyone now feels like their quality level went up, and maybe it did, but it's still low.

In design, there's still a trend of posting AI generated hero images with nonsense copy and being all proud of them. That's work that took less than 5 minutes and only looks impressive at first glance. And often not even that.

The low effort led to oversaturation of low quality. And that happens across the board.

Complexity kills gains

Once you run into complexity with AI, you end up crashing the entire AWS infrastructure just days before 13,000 engineers were fired.

This is where we're at.

Optimization without regard for safety, reliability or quality. It does bump perceived productivity. Objectively more work is done, even if it's low quality.

"Computer does code" is still magical enough for higher-ups in corporations.

While regular office workers quietly fear being replaced by AI, we the tech-people usually try to use it to maximize our output. But almost everyone fails to take a step back and see what's happening.

AI is a bubble

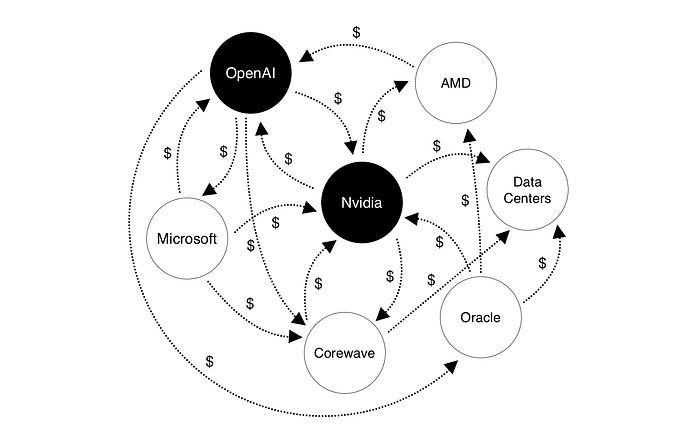

This graph has been making the rounds on the internet for a few days now. It shows a handful of AI and AI-adjacent companies circulating money between each other to inflate their valuations.

This financial future is not fueled by AI. AI is just an excuse to have a play with investors. And it's looking more and more like a dead-end.

OpenAI released Codex, a very powerful coding model, and then… the next thing they announced was AI erotica. Seriously?

Creating an AI-slop fest Sora app was also just a distraction. Look, we're building stuff! We're shipping! Doesn't matter if it makes sense in the long run, but at least we've done it!

When you combine it with those odd money operations, it's pretty clear there is currently no breakthrough. If we were any closer to AGI, they'd be focusing all their efforts on this instead of AI erotica.

All those smoke-and-mirrors pseudo-products are only there to keep the money flowing a little longer.

The big AI Short

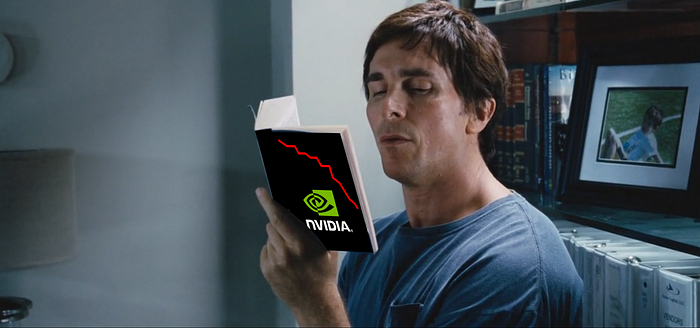

Dr. Michael Burry, famous for predicting the 2008 housing crisis, is saying the AI bubble is the 1999 dotcom crash multiplied. He points to the fake promise it all stands on, and to money changing hands to give the appearance of investment.

He's shorting both Nvidia and Palantir for over $1.1B. Warren Buffett sold most of his portfolio and is sitting on a huge pile of cash. Most large scale investors are cautious.

A lot of the money in AI companies is now money that they send to each other.

Burry went on to say that in the next 2 years the AI tech companies will likely lose up to $176B in valuations.

A graph showing how Burry predicts the market to go

Apple's quiet rebellion

But did you notice Apple isn't really on that chart? With all the money in the world, they likely wouldn't sleep on this, right? They'd invest in Nvidia or poach the best engineers and build an LLM. They surely can afford it.

Why didn't they take the step? Well… It's definitely a different company than the one Steve Jobs led, but the culture he instilled mostly remains.

Apple is not fully committed to AI because…

Most AI is used for slop creation

AI is useful, but useless

What Apple really needs is a slightly more functional Siri. They don't need full generative capabilities. They don't need a model to make videos or photos.

They already have powerful AI that affects every photo you take on your iPhone. There has been AI at Apple for way longer than ChatGPT has existed.

And they're only pursuing a handful of use cases. Not all of the popular ones.

Currently the ability to just make some weird emojis in the context of a conversation is innocent enough. For instance, I wanted to make a joke about Morty. He's an Italian Greyhound that loves everything smelly. So I made an emoji of him farting. Same with burnt toast, as a reply to one indie hacker asking me if he cooked.

End of creativity?

Replacing creatives with machines? Steve Jobs always said a computer is like a bicycle for the mind.

Apple has always put creatives front and center. They're the lifeblood of the company culture. Sure, Apple has become mainstream now and creatives are no longer the main target market.

Apple doesn't want to be associated with AI slop.

That's also why they recently showcased a behind the scenes video of the new Apple TV logo animation. It was done using real glass panels, manual lighting and some post-processing. No AI.

Sure, going against the trend is also clever marketing, but it reminds us a bit of Apple's culture too.

And sure, stuff like that CAN be done without the manual process. It can likely be done with AI with enough prompting. But showing they didn't use it is a quiet statement.

It says: we're not like the others.

Apple is waiting for AI to make sense. And I think it's refreshing that they're not racing blindly into the bubble.

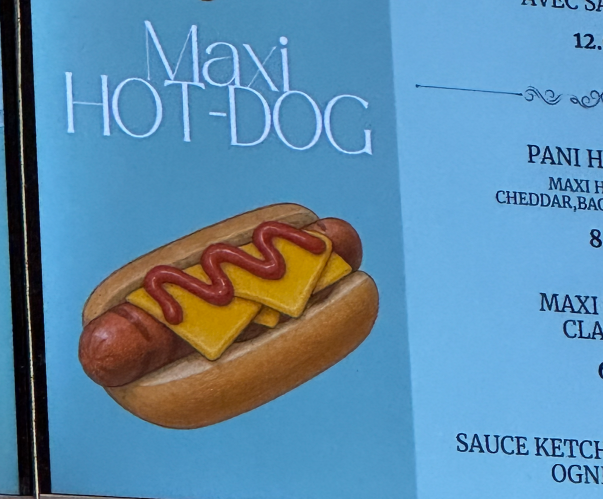

A clear ChatGPT generated image in a Normandy cafe menu

How people use AI

Sadly, the most outward facing use case is replacing human creativity or even just plain human thought. I've noticed that when most people need to answer a kickoff questionnaire for a redesign of their website, they ASK CHATGPT to write their company values.

I've seen marketing managers put an entire marketing plan into AI and then reply with ultra-em-dashed lists pretending that it's their original thought.

Overall the quality of outputs dropped, but at least it's now faster.

One person writes a plan with AI. Other people use AI to give feedback to that plan. Then the "author" uses AI to reply to that feedback.

People read less, understand less. But faster.

This is creating an army of mindless, brainless, corporate zombies. Ones that Apple as a brand definitely doesn't want to be associated with.

Original human ideas are scarce

AI needs a reset

Artificial Intelligence is not all bad. It can boost both quality and productivity. But not in its current form. Only a handful of people utilize that potential. Developers. True artists (not "AI artists"). Some designers. Researchers.

And while that happens the rest of the world makes slop that doesn't justify the existence of AI. They're also not willing to pay more than $20 for the privilege. Which can only speed up the bubble popping.

The models seem to have run into a wall regarding quality and error detection. That's why OpenAI announces silly things like the Sora social platform, the "everything apps" or AI erotica.

It shows there's no plan or vision, just extending the bubble a little longer with smoke and mirrors and hoping for the best.

I wonder if ChatGPT gave them that strategy…

Where will you be when your favorite chatbot goes offline? Still able to think and reason on your own?
